True or false? There is almost always ONE best way to solve a customer problem.

Reveal the answer by conducting a Kano study.

What Is Kano & Why Choose Kano

To reduce the risk of building the wrong solution, I recommend conducting a Kano study toward the middle of your design process. Do this just after you’ve identified the customer problem(s) to solve, but before you’ve launched into validation testing (e.g., usability).

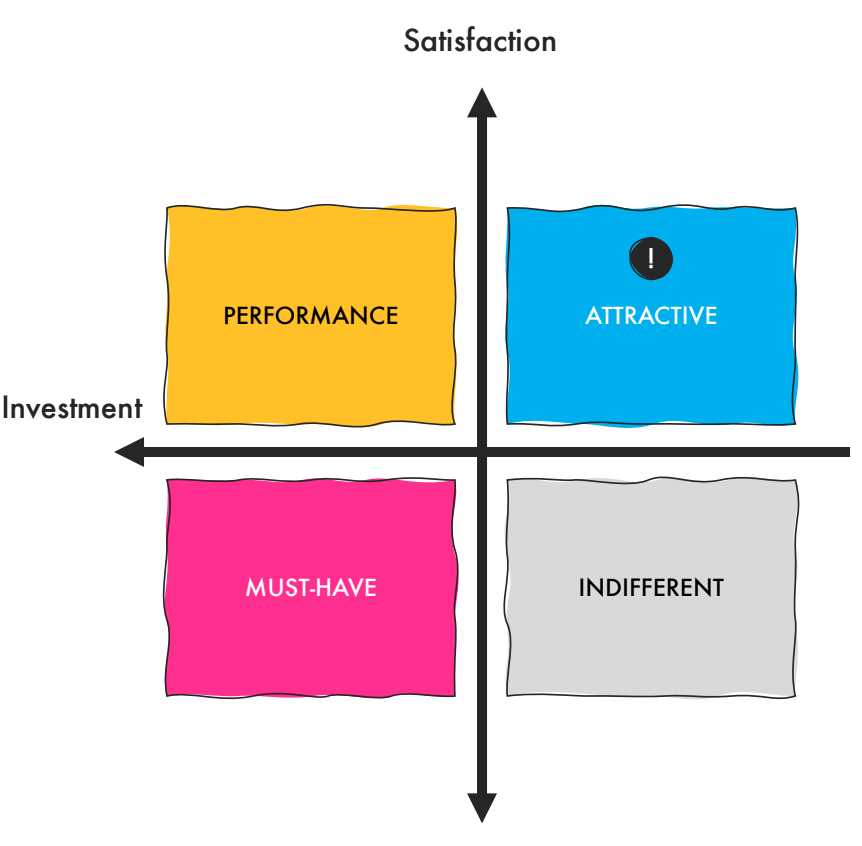

What’s a Kano study? Kano (pronounced: kah-no) is a research method that analyzes customer emotions by categorizing product features into 4 quadrants: Must-Have, Performance/One-Dimensional, Attractive/Delighters, and Indifferent. It’s named after its creator, Professor Noriaki Kano, a researcher in quality management. (See Wikipedia for succinct definitions of each category.)

Kano asks participants to rate each concept based on 2 emotional scales… how they would feel WITH the solution and then how they’d feel WITHOUT the solution.

A Kano Case Study

A few years ago, I brought the idea of fielding a Kano study to the product team… and they were game!

To help us generate lots of solutions to the problem we were solving, I first conducted a contextual inquiry study with a dozen participants. During the in-depth interviews (IDIs), I asked participants to walk me through competitor solutions—sharing with me their questions about what they were seeing, their frustrations and delighters, and their ideas for improvement.

Using this input, we developed 22 unique solutions that all solved the same customer problem.

Using the Kano method, I tested these 22 concepts over several days with a different set of 13 participants. I invited the entire product team and key stakeholders to (silently) observe the research study and take notes with me.

After the first day of Kano testing, it was clear that a few of the concepts were total duds, and so we removed them on future test days.

My Kano Research Approach

I structured the Kano as IDIs. All research participants belonged to the same marketing segment (rooted in purchase motivations, attitudes, and product preferences—not demographics).

Each concept included a user-friendly and well-written description of the solution alongside a low-fidelity sketch to help participants visualize the idea.

Tip: At the start of the Kano study, I calibrated participants to the untraditional rating system that Kano uses to ensure they had a correct understanding of it.

Step 1: Show Concept

One at a time, I showed the concepts to the participant, pausing on each one to gauge their understanding of the concept, document their ratings, and ask follow-up questions.

Step 2: Gauge Understanding

To help me gauge whether or not the participant had a clear understanding of the concept, I next asked questions like “what questions do you have about this concept?” or “how might you explain this concept to a friend?” If needed, I cleared up any misunderstandings before moving on to the ratings.

Step 3: Rate Concept

Next, the participant rated the concept using the 2 Kano scales (with and without). The rating scales were presented from negative to positive, to avoid left-side bias.

Step 4: Ask Follow-Up Questions

After the rating questions, I asked a few more follow-up questions about the concept to learn how it might be improved for future iterations.

Kano Analysis Methods

To date, I have uncovered 4 different ways to analyze the results of a Kano study. I tried them all and found that combining the DuMouchel and Moorman approaches was the most effective…

Discrete Method

In the discrete method, simply add up the number of participants who rated a concept as Must-Have, Performance, Attractive, and Indifferent.

Whichever one has the most votes, wins.
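As a rough sketch, the tally can be done in a few lines. The ratings below are hypothetical, and `discrete_category` is just an illustrative name. Ties are resolved by whichever category was seen first, which is a simplification.

```python
from collections import Counter


def discrete_category(ratings):
    """Return the most frequent category for one concept, plus the full tally."""
    counts = Counter(ratings)
    winner, _votes = counts.most_common(1)[0]
    return winner, counts


# Hypothetical per-participant categories for a single concept.
ratings = [
    "Attractive", "Attractive", "Indifferent",
    "Must-Have", "Attractive", "Performance",
]
winner, counts = discrete_category(ratings)
print(winner, dict(counts))
```

Notice that a concept can "win" as Attractive with only half of the votes, which is part of why this method can hide disagreement among participants.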

I was immediately skeptical of this approach. When I applied the discrete method, all but one concept was rated as “Attractive.”

Timko Method

In the Michael Timko method, satisfaction and dissatisfaction coefficients are plotted on a scale of 0 to 1 on a one-dimensional plane.
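Here is a small sketch of how these coefficients are commonly computed from the per-category counts for one concept. The formulas are the widely cited ones, not something specific to my study, and the counts are made up. Many write-ups report the dissatisfaction coefficient with a negative sign. I keep it as a positive magnitude here to match the 0 to 1 scale described above.

```python
def satisfaction_coefficients(attractive, performance, must_have, indifferent):
    """Compute (satisfaction, dissatisfaction) coefficients, each between 0 and 1."""
    total = attractive + performance + must_have + indifferent
    if total == 0:
        raise ValueError("need at least one categorized response")
    # Share of participants who are happier when the concept is present.
    satisfaction = (attractive + performance) / total
    # Share of participants who are unhappier when the concept is absent.
    dissatisfaction = (performance + must_have) / total
    return satisfaction, dissatisfaction


# Hypothetical counts for one concept across 13 participants.
sat, dis = satisfaction_coefficients(
    attractive=6, performance=2, must_have=1, indifferent=4
)
print(round(sat, 2), round(dis, 2))
```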

After plotting these results, again, almost every concept was identified as “Attractive.” And again, I was very skeptical because it just didn’t align with my experience. I definitely did not observe delighter-level enthusiasm for the majority of concepts.

DuMouchel Method

Next, I tried the William DuMouchel method, which categorizes features on a two-dimensional plane.
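A minimal sketch of this continuous analysis, as it is commonly described: each answer is converted to a number, the numbers are averaged per concept, and the two averages become a point on the 2 by 2 matrix. The asymmetric scores (-2 to 4), the answer labels, and the cutoff of 2 are assumptions taken from common descriptions of the method, so adjust them to your own survey.

```python
from statistics import mean

# Assumed numeric scores. Positive answers are weighted more heavily.
WITH_SCORE = {"like": 4, "expect": 2, "neutral": 0, "tolerate": -1, "dislike": -2}
# The WITHOUT scale is reversed: disliking the absence is the strong signal.
WITHOUT_SCORE = {"like": -2, "expect": -1, "neutral": 0, "tolerate": 2, "dislike": 4}


def continuous_position(answer_pairs):
    """Average the (with, without) answers for one concept into an (x, y) point."""
    with_avg = mean(WITH_SCORE[w] for w, _ in answer_pairs)
    without_avg = mean(WITHOUT_SCORE[wo] for _, wo in answer_pairs)
    return with_avg, without_avg


def quadrant(with_avg, without_avg, cutoff=2):
    """Name the quadrant of the 2 by 2 matrix that a point falls into."""
    high_with = with_avg >= cutoff
    high_without = without_avg >= cutoff
    if high_with and high_without:
        return "Performance"
    if high_with:
        return "Attractive"
    if high_without:
        return "Must-Have"
    return "Indifferent"


# Hypothetical (with, without) answers from three participants for one concept.
pairs = [("like", "neutral"), ("like", "tolerate"), ("expect", "neutral")]
x, y = continuous_position(pairs)
print(quadrant(x, y))
```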

I still didn’t feel confident that this analysis method was accurate. Almost all the concepts were clustered together in the same spot on the 2 by 2 matrix. This raised a red flag for me that something was wrong.

At this point, I was getting frustrated. Was it me? Did I conduct the study incorrectly? Maybe running a Kano study wasn’t such a hot idea.

I stewed on it a bit, and then I suddenly remembered that I had watched a talk several years ago by Jan Moorman about her Kano experience. I quickly found the talk again and re-watched it to learn how she conducted Kano analysis.

Moorman Approach

In her talk, Moorman shared that her first Kano study took her down a twisty path very similar to mine. Luckily, she had an innate sense that the results were “noisy,” and so she came up with the idea to split the data by persona. Voila! Now the analysis made perfect sense to her. She knew there were two distinct personas in her participant pool: those who are savvy and those who are unsavvy about the use of AI in the travel industry.

Fortunately, I had asked each participant some warm-up questions at the start of my Kano study, including some questions about their mental model of the topic I was studying. Similar to Moorman, I had uncovered savvy and unsavvy personas within my participants, too.

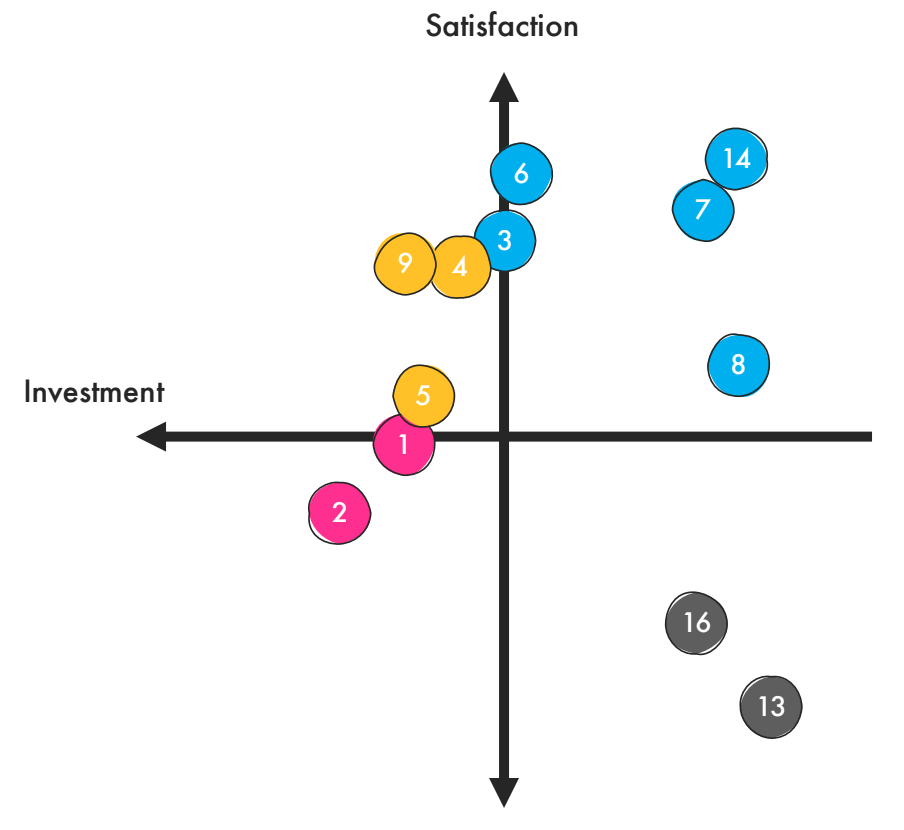

Using Moorman’s approach, I first segmented the raw Kano data by persona, and then I used the DuMouchel method to analyze each set of data.
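In code, the segmentation step is little more than grouping the raw responses by persona before averaging. This is a sketch under assumptions: the record fields, the answer labels, the numeric scores, and the concept name are all hypothetical stand-ins, not my actual data.

```python
from collections import defaultdict
from statistics import mean

# Assumed numeric scores for the WITH and WITHOUT scales.
WITH_SCORE = {"like": 4, "expect": 2, "neutral": 0, "tolerate": -1, "dislike": -2}
WITHOUT_SCORE = {"like": -2, "expect": -1, "neutral": 0, "tolerate": 2, "dislike": 4}


def positions_by_persona(responses):
    """Average the scores separately for each (persona, concept) pair."""
    grouped = defaultdict(list)
    for response in responses:
        grouped[(response["persona"], response["concept"])].append(response)
    return {
        key: (
            mean(WITH_SCORE[r["with"]] for r in rows),
            mean(WITHOUT_SCORE[r["without"]] for r in rows),
        )
        for key, rows in grouped.items()
    }


# Hypothetical raw responses for one made-up concept.
responses = [
    {"persona": "savvy", "concept": "interactive chart", "with": "like", "without": "dislike"},
    {"persona": "savvy", "concept": "interactive chart", "with": "like", "without": "tolerate"},
    {"persona": "less savvy", "concept": "interactive chart", "with": "neutral", "without": "neutral"},
    {"persona": "less savvy", "concept": "interactive chart", "with": "tolerate", "without": "like"},
]
positions = positions_by_persona(responses)
for key, point in positions.items():
    print(key, point)
```

Pooled together, these four responses would average out to a point near the middle of the matrix. Split by persona, the same concept lands in very different places for each group.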

I was stunned.

Each of the 2 groups had rated the concepts in a completely different way! Here is how the less savvy group rated the remaining concepts:

The less savvy group was overwhelmed and turned off by the more data-heavy and interactive concepts. Instead, they were more drawn to the simpler, non-interactive concepts.

The more savvy group loved the data-intense concepts and the ability to interact with them to gain a personalized experience.

It was a huge light bulb moment for us. Up until that point, we had no idea that approx. half of customers had fundamental misconceptions about the products. (Mental model research, FTW!)

So which concept did we implement? After discussing it as a team, we decided it was best to meet customers where they are (i.e., the simpler, non-interactive solutions).

My Kano Analysis Takeaways

If you think about it, it makes a lot of sense why different research participants had completely different and visceral emotional reactions to the same concepts.

“This concept doesn’t align with my needs or my beliefs, therefore, there is something wrong with it.”

Participant who has a negative emotional response

“This concept perfectly aligns with my needs and beliefs, therefore, it feels like it was designed just for me.”

Participant who has a positive emotional response

My #1 lesson learned from Kano…

Take extra care in recruiting and knowing exactly who your research participants are—including their experiences, attitudes, preferences, mental models, etc. If there’s noise in the data, there are likely polarizing and conflicting emotional responses hidden within.